The Problem

- Azure Storage Explorer is uploading too slowly

- The AzCopy copy command will not upload. Error: “RESPONSE Status: 403 This request is not authorized to perform this operation using this permission.”

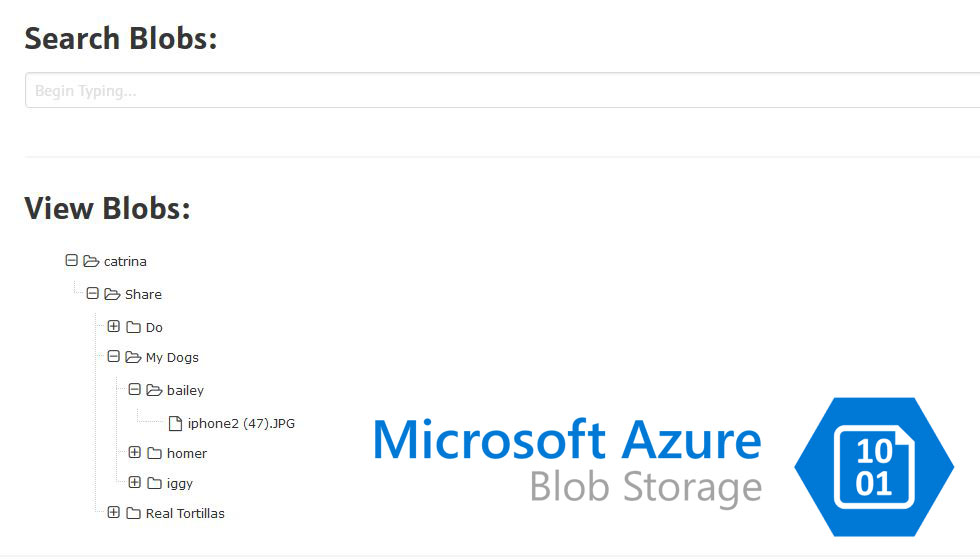

Today, I was trying to upload a directory of images (1.3 GB) to blob storage for public access. Though I often use Azure Storage Explorer for smaller uploads, it was proving impossible with this large directory. My connection came to a grinding halt and the files were taking far too long to upload.

So, after some research, I learned that AzCopy is much more efficient with larger data transfers, and once I got it working, I found this to be true. However, using a direct URL as per the docs gave me a 403 error, despite my being the owner of the container and the container seeming to have the proper permissions. So I decided to append a SAS token to the end of the URL (also in the docs) and then run the azcopy copy command.
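To make the idea concrete, here is a minimal sketch of how the destination URL and the command fit together. The storage account name, container name, local path, and SAS token are all placeholders I made up for illustration, and the helper `build_azcopy_command` is hypothetical, not part of AzCopy. It only builds and prints the command; it does not run it.

```python
def build_azcopy_command(source_dir, account, container, sas_token):
    """Build an `azcopy copy` argument list with the SAS token appended to the URL.

    All argument values are supplied by the caller; nothing here talks to Azure.
    """
    # A SAS token copied from a tool may or may not start with "?"; normalize it.
    token = sas_token.lstrip("?")
    # Container URL followed by "?" and the SAS token.
    destination = f"https://{account}.blob.core.windows.net/{container}?{token}"
    # --recursive tells AzCopy to include everything inside the directory.
    return ["azcopy", "copy", source_dir, destination, "--recursive"]


# Placeholder values (assumptions, not real credentials).
command = build_azcopy_command(
    r"C:\images",
    "mystorageaccount",
    "mycontainer",
    "?sv=PLACEHOLDER&sig=PLACEHOLDER",
)
print(command)
```

On the command line, the destination URL should be wrapped in quotes, because the `&` characters in a SAS token otherwise get interpreted by the shell.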

Below is how I proceeded to get it working.

The Solution

Tools used:

- Command Line on Windows

- Azure Storage Explorer

- Azure Portal

- AzCopy